1. Kubernetes certificate configuration

If anything is unclear, compare with "Kubernetes distributed installation (3): certificate configuration".

The process here is much the same.

root@k8s-master-u2404-4-20-101:~# mkdir TLS/k8s

root@k8s-master-u2404-4-20-101:~# cd TLS/k8s/

root@k8s-master-u2404-4-20-101:~/TLS/k8s# vim ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}
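The expiry values above are given in hours. As a quick sanity check, here is a minimal sketch of the conversion; `hours_to_days` is a helper name made up for this example, not part of cfssl. As far as I know, these values only govern certificates signed with this config. The lifetime of the CA itself comes from an optional `"ca": {"expiry": ...}` entry in the CSR file, or from cfssl's built-in default when that entry is absent, so it is worth checking the CA's own dates after generating it.

```shell
# Convert a cfssl-style duration such as "87600h" into whole days.
# hours_to_days is a hypothetical helper used only for illustration.
hours_to_days() {
    echo $(( ${1%h} / 24 ))
}

hours_to_days 87600h   # prints 3650 (about ten years)
```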

root@k8s-master-u2404-4-20-101:~/TLS/k8s# vim ca-csr.json

{

"CN": "kubernetes CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "Beijing",

"L": "Beijing",

"O": "myc",

"OU": "openssl"

}

]

}

We now have the following files:

root@k8s-master-u2404-4-20-101:~/TLS/k8s# ls

ca-config.json ca-csr.json

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2025/07/21 11:15:50 [INFO] generating a new CA key and certificate from CSR

2025/07/21 11:15:50 [INFO] generate received request

2025/07/21 11:15:50 [INFO] received CSR

2025/07/21 11:15:50 [INFO] generating key: rsa-2048

2025/07/21 11:15:50 [INFO] encoded CSR

2025/07/21 11:15:50 [INFO] signed certificate with serial number 400928798311424467130481472047250980132669378198

root@k8s-master-u2404-4-20-101:~/TLS/k8s# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

Issue the server certificate

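The hosts field in this file must contain the IPs of every Master, load balancer, and VIP; none may be left out. To make later scale-out easier, add a few spare IPs as well.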

root@k8s-master-u2404-4-20-101:~/TLS/k8s# vim server-csr.json

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"172.16.101.101",

"172.16.101.102",

"172.16.101.103",

"172.16.101.104",

"172.16.101.105",

"172.16.101.106",

"172.16.101.107",

"172.16.101.108",

"172.16.101.109",

"172.16.101.110",

"172.16.101.111",

"172.16.101.112",

"172.16.101.113",

"172.16.101.114",

"172.16.101.115",

"172.16.101.116",

"172.16.101.117",

"172.16.101.118",

"172.16.101.119",

"172.16.101.120",

"172.16.101.121",

"172.16.101.122",

"172.16.101.123",

"172.16.101.124",

"172.16.101.125",

"172.16.101.126",

"172.16.101.127",

"172.16.101.128",

"172.16.101.129",

"172.16.101.130",

"172.16.101.131",

"172.16.101.132",

"172.16.101.133",

"172.16.101.134",

"172.16.101.135",

"172.16.101.136",

"172.16.101.137",

"172.16.101.138",

"172.16.101.139",

"172.16.101.140",

"172.16.101.141",

"172.16.101.142",

"172.16.101.143",

"172.16.101.144",

"172.16.101.145",

"172.16.101.146",

"172.16.101.147",

"172.16.101.148",

"172.16.101.149",

"172.16.101.150",

"172.16.101.151",

"172.16.101.152",

"172.16.101.153",

"172.16.101.154",

"172.16.101.155",

"172.16.101.156",

"172.16.101.157",

"172.16.101.158",

"172.16.101.159",

"172.16.101.160",

"172.16.101.161",

"172.16.101.162",

"172.16.101.163",

"172.16.101.164",

"172.16.101.165",

"172.16.101.166",

"172.16.101.167",

"172.16.101.168",

"172.16.101.169",

"172.16.101.170",

"172.16.101.171",

"172.16.101.172",

"172.16.101.173",

"172.16.101.174",

"172.16.101.175",

"172.16.101.176",

"172.16.101.177",

"172.16.101.178",

"172.16.101.179",

"172.16.101.180",

"172.16.101.181",

"172.16.101.182",

"172.16.101.183",

"172.16.101.184",

"172.16.101.185",

"172.16.101.186",

"172.16.101.187",

"172.16.101.188",

"172.16.101.189",

"172.16.101.190",

"172.16.101.191",

"172.16.101.192",

"172.16.101.193",

"172.16.101.194",

"172.16.101.195",

"172.16.101.196",

"172.16.101.197",

"172.16.101.198",

"172.16.101.199",

"172.16.101.200",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"ST": "BeiJing",

"L": "BeiJing",

"O": "myc",

"OU": "k8sSystem"

}

]

}
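The hundred consecutive addresses in the hosts list above do not have to be typed by hand. A minimal sketch, assuming the spare addresses form one contiguous range in a single /24 as they do here; `gen_hosts` is a hypothetical helper name:

```shell
# Print one quoted, comma-terminated JSON string per address,
# ready to paste into the "hosts" array of server-csr.json.
# Arguments: network prefix (first three octets), first and last host number.
gen_hosts() (
    prefix=$1
    i=$2
    last=$3
    while [ "$i" -le "$last" ]; do
        printf '"%s.%s",\n' "$prefix" "$i"
        i=$((i + 1))
    done
)

gen_hosts 172.16.101 101 200
```

Every line ends with a comma, which fits this file because the DNS names follow the IP entries in the array.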

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

2025/07/21 11:21:12 [INFO] generate received request

2025/07/21 11:21:12 [INFO] received CSR

2025/07/21 11:21:12 [INFO] generating key: rsa-2048

2025/07/21 11:21:12 [INFO] encoded CSR

2025/07/21 11:21:12 [INFO] signed certificate with serial number 147340516391319671056201904911995967079368136716

root@k8s-master-u2404-4-20-101:~/TLS/k8s# ls

ca-config.json ca-csr.json ca.pem server-csr.json server.pem

ca.csr ca-key.pem server.csr server-key.pem

2. kube-apiserver configuration

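Download URL from the official site. Choose the archive that matches your CPU architecture: the link below is the amd64 build, while the commands further down fetch the arm64 build.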

https://dl.k8s.io/v1.30.2/kubernetes-server-linux-amd64.tar.gz

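Deploying these services is where things most often go wrong. I list the problems I ran into at the end of this section so you can compare.

Differences between Linux distributions, Kubernetes versions, and even CPU platforms mean your results may not match mine; the most typical case I have seen is a host with no iptables command.

A brief introduction first.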

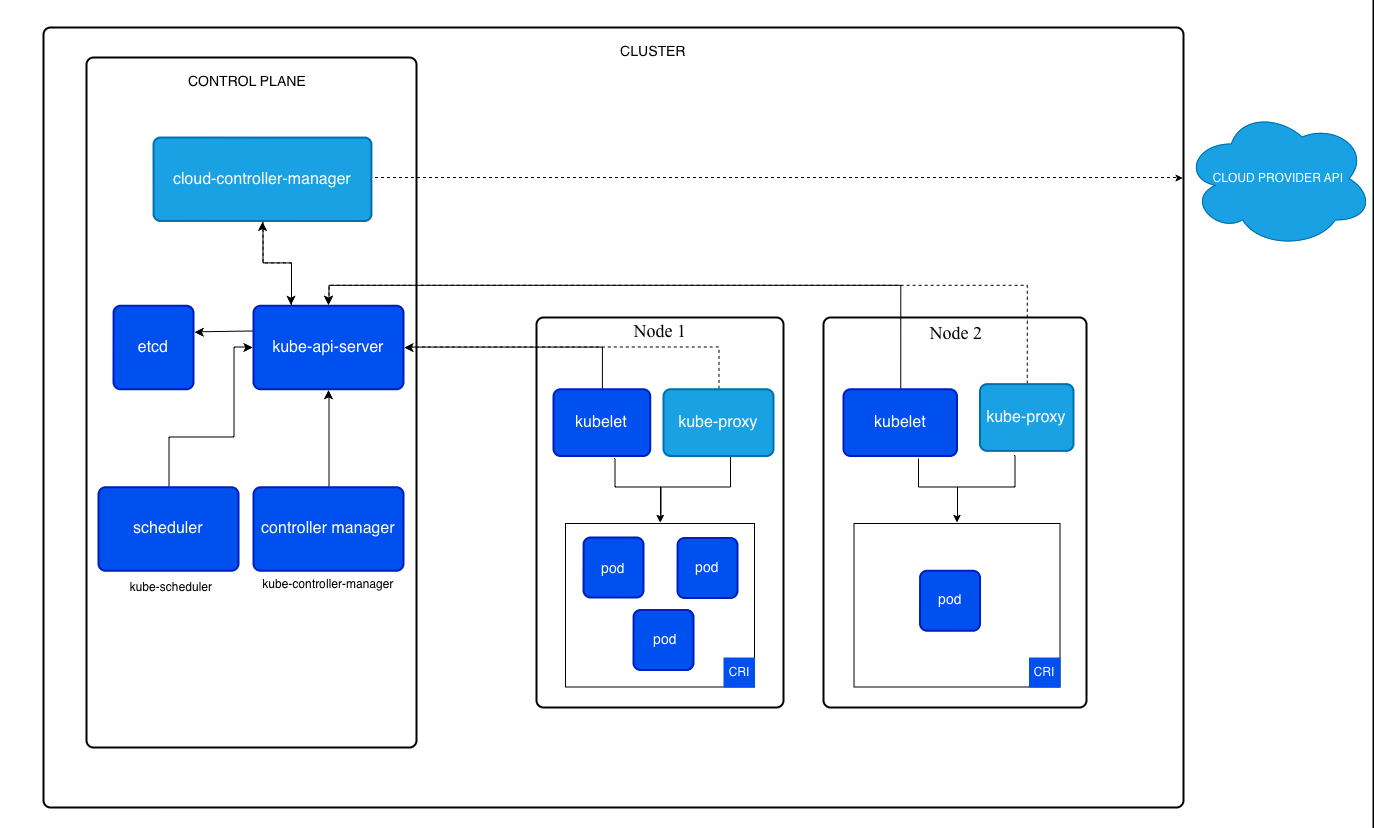

kube-apiserver is the control-plane component that exposes the Kubernetes API and handles incoming requests. The API server is the front end of the Kubernetes control plane.

root@k8s-master-u2404-4-20-101:~# mkdir kubernetes_install

root@k8s-master-u2404-4-20-101:~# cd kubernetes_install/

root@k8s-master-u2404-4-20-101:~/kubernetes_install# wget https://dl.k8s.io/v1.30.2/kubernetes-server-linux-arm64.tar.gz

root@k8s-master-u2404-4-20-101:~/kubernetes_install# mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

root@k8s-master-u2404-4-20-101:~/kubernetes_install# tar xf kubernetes-server-linux-arm64.tar.gz

root@k8s-master-u2404-4-20-101:~/kubernetes_install# cp kubernetes/server/bin/kube-apiserver kubernetes/server/bin/kube-controller-manager kubernetes/server/bin/kube-scheduler /opt/kubernetes/bin/

root@k8s-master-u2404-4-20-101:~/kubernetes_install# cp kubernetes/server/bin/kubectl /usr/bin/

root@k8s-master-u2404-4-20-101:~/kubernetes_install# cp kubernetes/server/bin/kubectl /usr/local/bin/

root@k8s-master-u2404-4-20-101:~/kubernetes_install# vim /opt/kubernetes/cfg/kube-apiserver.conf

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \

--v=2 \

--etcd-servers=https://172.16.101.101:2379,https://172.16.101.102:2379,https://172.16.101.103:2379 \

--bind-address=172.16.101.101 \

--secure-port=6443 \

--advertise-address=172.16.101.101 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth=true \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-32767 \

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--service-account-issuer=api \

--service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \

--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \

--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \

--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \

--requestheader-allowed-names=kubernetes \

--requestheader-extra-headers-prefix=X-Remote-Extra- \

--requestheader-group-headers=X-Remote-Group \

--requestheader-username-headers=X-Remote-User \

--enable-aggregator-routing=true \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--service-account-issuer=https://kubernetes.default.svc.cluster.local \

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"

#--v: log verbosity level

#--etcd-servers: etcd cluster endpoints

#--bind-address: listen address

#--secure-port: HTTPS port

#--advertise-address: address advertised to the rest of the cluster

#--allow-privileged: allow privileged containers

#--service-cluster-ip-range: virtual IP range for Services

#--enable-admission-plugins: admission control plugins

#--authorization-mode: authorization modes; enables RBAC and Node authorization

#--enable-bootstrap-token-auth: enable the TLS bootstrap mechanism

#--token-auth-file: bootstrap token file

#--service-node-port-range: default port range for NodePort Services

#--kubelet-client-xxx: client certificate the apiserver uses to reach the kubelet

#--tls-xxx-file: apiserver HTTPS certificate

#Required since v1.20: --service-account-issuer, --service-account-signing-key-file

#--etcd-xxxfile: certificates for connecting to the etcd cluster

#--audit-log-xxx: audit log settings

#Aggregation layer settings: --requestheader-client-ca-file, --proxy-client-cert-file, --proxy-client-key-file, --requestheader-allowed-names, --requestheader-extra-headers-prefix, --requestheader-group-headers, --requestheader-username-headers, --enable-aggregator-routing
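One detail worth spelling out for --service-cluster-ip-range: the apiserver gives the first usable address of that range to the built-in `kubernetes` Service, which is why 10.0.0.1 appears in the hosts list of server-csr.json. A minimal sketch of that calculation for an IPv4 CIDR; `first_service_ip` is a hypothetical helper name:

```shell
# Print the first usable address of an IPv4 CIDR (network address + 1).
# The function body runs in a subshell so IFS and the positional
# parameters of the caller are left untouched.
first_service_ip() (
    ip=${1%/*}
    prefix=${1#*/}
    IFS=.
    set -- $ip                      # split the dotted quad into $1..$4
    n=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    first=$(( (n & mask) + 1 ))
    printf '%d.%d.%d.%d\n' \
        "$(( (first >> 24) & 255 ))" "$(( (first >> 16) & 255 ))" \
        "$(( (first >> 8) & 255 ))"  "$(( first & 255 ))"
)

first_service_ip 10.0.0.0/24   # prints 10.0.0.1
```

If you change the Service range, the address printed here is the one that has to be in the server certificate.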

Copy the certificates:

root@k8s-master-u2404-4-20-101:~/kubernetes_install# cp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem /opt/kubernetes/ssl/

root@k8s-master-u2404-4-20-101:~/kubernetes_install# ls /opt/kubernetes/ssl/

ca-key.pem ca.pem server-key.pem server.pem

TLS Bootstrapping

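TLS bootstrapping: once the master's kube-apiserver enables TLS authentication, the kubelet and kube-proxy on every node must present a valid certificate signed by the CA to talk to it. With many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster harder. To simplify this, Kubernetes provides the TLS bootstrapping mechanism: the kubelet connects as a low-privilege user and requests a certificate through the apiserver, and the certificate is signed dynamically (the signing itself is done by kube-controller-manager with the CA given in its --cluster-signing-* flags). This approach is strongly recommended on nodes. It currently applies mainly to the kubelet; kube-proxy still uses a single certificate that we issue ourselves.

--token-auth-file=/opt/kubernetes/cfg/token.csv

Format: token,user name,UID,group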
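A line in that format can also be produced in one step. This is only a sketch: the function name is hypothetical, and the user name, UID, and group are simply the values this walkthrough uses.

```shell
# Print one token.csv line: 16 random bytes as 32 hex characters,
# followed by the user name, UID and group used in this walkthrough.
new_bootstrap_line() (
    token=$(head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n')
    printf '%s,kubelet-bootstrap,10001,"system:node-bootstrapper"\n' "$token"
)

new_bootstrap_line
```

Redirecting the output to /opt/kubernetes/cfg/token.csv gives the same kind of file as the manual steps.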

root@k8s-master-u2404-4-20-101:~/kubernetes_install# head -c 16 /dev/urandom | od -An -t x | tr -d ' '

af0d25ea1e38abacfecc5270450ea3c3

root@k8s-master-u2404-4-20-101:~/kubernetes_install# vim /opt/kubernetes/cfg/token.csv

af0d25ea1e38abacfecc5270450ea3c3,kubelet-bootstrap,10001,"system:node-bootstrapper"

kube-apiserver.service

root@k8s-master-u2404-4-20-101:~/kubernetes_install# vim /usr/lib/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target
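Before starting the service, it can save a restart loop to confirm that every certificate and token file named in kube-apiserver.conf really exists. A minimal sketch, assuming the paths of interest end in .pem or .csv as they do in this walkthrough; `missing_files` is a hypothetical helper name:

```shell
# List every absolute *.pem / *.csv path used as a flag value in the
# given conf file that does not exist on disk. Prints nothing when all
# referenced files are present.
missing_files() {
    grep -oE '=/[^[:space:]"]+\.(pem|csv)' "$1" | cut -c2- | sort -u |
    while IFS= read -r path; do
        [ -e "$path" ] || echo "$path"
    done
}
```

For example, `missing_files /opt/kubernetes/cfg/kube-apiserver.conf` should print nothing once the certificates and token.csv are in place.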

root@k8s-master-u2404-4-20-101:~/kubernetes_install# systemctl daemon-reload

root@k8s-master-u2404-4-20-101:~/kubernetes_install# systemctl enable --now kube-apiserver

Created symlink /etc/systemd/system/multi-user.target.wants/kube-apiserver.service → /usr/lib/systemd/system/kube-apiserver.service.

root@k8s-master-u2404-4-20-101:~/kubernetes_install# systemctl status kube-apiserver.service

● kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/usr/lib/systemd/system/kube-apiserver.service; enabled; preset: enabled)

Active: active (running) since Mon 2025-07-21 12:21:28 CST; 26s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 3463 (kube-apiserver)

Tasks: 8 (limit: 4550)

Memory: 187.7M (peak: 188.2M)

CPU: 1.779s

CGroup: /system.slice/kube-apiserver.service

└─3463 /opt/kubernetes/bin/kube-apiserver --enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota --v=2 --etcd-servers=https://172.16.101.101:2379,https://172.16.101.102:2379,https://172.16.101.103:2379 --bind-address=172.16.101.101 --secure-port=6443 --advertise-address=172.16.101.101 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --authorization-mode=RBAC,Node --enable-bootstrap-token-auth=true --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-32767 --kubelet-client-certificate=/opt/kubernetes/ssl/server.pem --kubelet-client-key=/opt/kubernetes/ssl/server-key.pem --tls-cert-file=/opt/kubernetes/ssl/server.pem --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --service-account-issuer=api --service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem --requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem --proxy-client-cert-file=/opt/kubernetes/ssl/server.pem --proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem --requestheader-allowed-names=kubernetes --requestheader-extra-headers-prefix=X-Remote-Extra- --requestheader-group-headers=X-Remote-Group --requestheader-username-headers=X-Remote-User --enable-aggregator-routing=true --audit-log-maxage=30 --audit-log-maxbackup=3 --audit-log-maxsize=100 --service-account-issuer=https://kubernetes.default.svc.cluster.local --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname --audit-log-path=/opt/kubernetes/logs/k8s-audit.log

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:30.688008 3463 storage_rbac.go:321] created rolebinding.rbac.authorization.k8s.io/system:controller:cloud-provider in kube-system

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:30.697775 3463 healthz.go:255] poststarthook/rbac/bootstrap-roles check failed: readyz

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: [-]poststarthook/rbac/bootstrap-roles failed: not finished

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:30.700700 3463 storage_rbac.go:321] created rolebinding.rbac.authorization.k8s.io/system:controller:token-cleaner in kube-system

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:30.722067 3463 storage_rbac.go:321] created rolebinding.rbac.authorization.k8s.io/system:controller:bootstrap-signer in kube-public

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:30.802037 3463 alloc.go:330] "allocated clusterIPs" service="default/kubernetes" clusterIPs={"IPv4":"10.0.0.1"}

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: W0721 12:21:30.806259 3463 lease.go:265] Resetting endpoints for master service "kubernetes" to [172.16.101.101]

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:30.806755 3463 controller.go:615] quota admission added evaluator for: endpoints

Jul 21 12:21:30 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:30.809043 3463 controller.go:615] quota admission added evaluator for: endpointslices.discovery.k8s.io

Jul 21 12:21:40 k8s-master-u2404-4-20-101 kube-apiserver[3463]: I0721 12:21:40.457088 3463 apf_controller.go:455] "Update CurrentCL" plName="exempt" seatDemandHighWatermark=3 seatDemandAvg=0.09249376344068766 seatDemandStdev=0.29905462070197086 seatDemandSmoothed=8.001123746013354 fairFrac=2.2631483338198577 currentCL=18 concurrencyDenominator=18 backstop=false

Errors encountered

Wrong platform (the binary was built for a different CPU architecture)

Jul 21 12:00:37 k8s-master-u2404-4-20-101 (piserver)[3273]: kube-apiserver.service: Failed to execute /opt/kubernetes/bin/kube-apiserver: Exec format error

root@k8s-master-u2404-4-20-101:~/kubernetes_install# systemctl status kube-apiserver

× kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/usr/lib/systemd/system/kube-apiserver.service; enabled; preset: enabled)

Active: failed (Result: exit-code) since Mon 2025-07-21 12:00:38 CST; 8min ago

Duration: 4ms

Docs: https://github.com/kubernetes/kubernetes

Process: 3286 ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS (code=exited, status=203/EXEC)

Main PID: 3286 (code=exited, status=203/EXEC)

CPU: 1ms

Jul 21 12:00:38 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Scheduled restart job, restart counter is at 5.

Jul 21 12:00:38 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Start request repeated too quickly.

Jul 21 12:00:38 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Failed with result 'exit-code'.

Jul 21 12:00:38 k8s-master-u2404-4-20-101 systemd[1]: Failed to start kube-apiserver.service - Kubernetes API Server.

root@k8s-master-u2404-4-20-101:~/kubernetes_install# journalctl -u kube-apiserver

Jul 21 12:00:37 k8s-master-u2404-4-20-101 systemd[1]: Started kube-apiserver.service - Kubernetes API Server.

Jul 21 12:00:37 k8s-master-u2404-4-20-101 (piserver)[3273]: kube-apiserver.service: Failed to execute /opt/kubernetes/bin/kube-apiserver: Exec format error

Jul 21 12:00:37 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Main process exited, code=exited, status=203/EXEC

Jul 21 12:00:37 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Failed with result 'exit-code'.

Jul 21 12:00:37 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Scheduled restart job, restart counter is at 1.

Jul 21 12:00:37 k8s-master-u2404-4-20-101 systemd[1]: Started kube-apiserver.service - Kubernetes API Server.

Jul 21 12:00:37 k8s-master-u2404-4-20-101 (piserver)[3277]: kube-apiserver.service: Failed to execute /opt/kubernetes/bin/kube-apiserver: Exec format error

Jul 21 12:00:37 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Main process exited, code=exited, status=203/EXEC

Jul 21 12:00:37 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Failed with result 'exit-code'.

Jul 21 12:00:38 k8s-master-u2404-4-20-101 systemd[1]: kube-apiserver.service: Scheduled restart job, restart counter is at 2.

Jul 21 12:00:38 k8s-master-u2404-4-20-101 systemd[1]: Started kube-apiserver.service - Kubernetes API Server.
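"Exec format error" with status=203/EXEC usually means the binary does not match the machine's CPU architecture. A minimal sketch for picking the right archive from `uname -m`; both function names are hypothetical, only the two architectures mentioned in this post are mapped, and the URL pattern is simply the one used in the download step:

```shell
# Map a `uname -m` value to the architecture name used in the archive name.
k8s_arch() {
    case "$1" in
        x86_64|amd64)  echo amd64 ;;
        aarch64|arm64) echo arm64 ;;
        *) echo "architecture not mapped in this sketch: $1" >&2; return 1 ;;
    esac
}

# Build the server archive URL for a given version and machine type.
k8s_server_url() (
    arch=$(k8s_arch "$2") || exit 1
    echo "https://dl.k8s.io/$1/kubernetes-server-linux-${arch}.tar.gz"
)
```

Calling `k8s_server_url v1.30.2 "$(uname -m)"` on the target host prints the archive to download. Running `file /opt/kubernetes/bin/kube-apiserver` on an already installed binary shows which architecture it was built for.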

Hidden characters in the config file

root@node1:~/kubernetes_install# journalctl -u kube-apiserver

Nov 12 15:47:31 node1 kube-apiserver[2793]: Error: "kube-apiserver" does not take any arguments, got ["\t"]

Nov 12 15:47:31 node1 kube-apiserver[2793]: Error: "kube-apiserver" does not take any arguments, got ["\t"]

Nov 12 15:47:31 node1 systemd[1]: kube-apiserver.service: Main process exited, code=exited, status=1/FAILURE

Nov 12 15:47:31 node1 systemd[1]: kube-apiserver.service: Failed with result 'exit-code'.

Nov 12 15:47:31 node1 systemd[1]: kube-apiserver.service: Scheduled restart job, restart counter is at 5.

Nov 12 15:47:31 node1 systemd[1]: kube-apiserver.service: Start request repeated too quickly.

Nov 12 15:47:31 node1 systemd[1]: kube-apiserver.service: Failed with result 'exit-code'.

Nov 12 15:47:31 node1 systemd[1]: Failed to start kube-apiserver.service - Kubernetes API Server.

root@node1:~/kubernetes_install# cat -A /opt/kubernetes/cfg/kube-apiserver.conf

KUBE_APISERVER_OPTS="--enable-admission-plugins=NamespaceLifecycle,NodeRestriction,LimitRanger,ServiceAccount,DefaultStorageClass,ResourceQuota \$

--v=2 \^I$ ##### here: ^I is a tab after the backslash

--etcd-servers=https://172.16.101.151:2379,https://172.16.101.153:2379,https://172.16.101.149:2379 \$

--bind-address=172.16.101.151 \$

--secure-port=6443 \$

--advertise-address=172.16.101.151 \$

--allow-privileged=true \$

--service-cluster-ip-range=10.0.0.0/24 \$

--authorization-mode=RBAC,Node \$

--enable-bootstrap-token-auth=true \$

--token-auth-file=/opt/kubernetes/cfg/token.csv \$

--service-node-port-range=30000-32767 \$

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \$

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \$

--tls-cert-file=/opt/kubernetes/ssl/server.pem \$

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \$

--client-ca-file=/opt/kubernetes/ssl/ca.pem \$

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \$

--service-account-issuer=api \$

--service-account-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \$

--etcd-cafile=/opt/etcd/ssl/ca.pem \$

--etcd-certfile=/opt/etcd/ssl/server.pem \$

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \$

--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \$

--proxy-client-cert-file=/opt/kubernetes/ssl/server.pem \$

--proxy-client-key-file=/opt/kubernetes/ssl/server-key.pem \$

--requestheader-allowed-names=kubernetes \$

--requestheader-extra-headers-prefix=X-Remote-Extra- \$

--requestheader-group-headers=X-Remote-Group \$

--requestheader-username-headers=X-Remote-User \$

--enable-aggregator-routing=true \$

--audit-log-maxage=30 \$

--audit-log-maxbackup=3 \$

--audit-log-maxsize=100 \$

--service-account-issuer=https://kubernetes.default.svc.cluster.local \$

--kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname \$

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"$
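The `^I` in the `cat -A` output is a tab sitting between the backslash and the end of the line, and it ends up being handed to kube-apiserver as a stray argument (the `got ["\t"]` in the log). A minimal sketch for finding and removing such whitespace, assuming GNU grep and sed; both function names are hypothetical:

```shell
# Show (with line numbers) every line where whitespace follows the
# trailing backslash.
find_bad_continuations() {
    grep -nE '\\[[:space:]]+$' "$1"
}

# Remove that whitespace in place so each backslash is the last
# character on its line.
fix_bad_continuations() {
    sed -i -E 's/\\[[:space:]]+$/\\/' "$1"
}
```

Run `find_bad_continuations /opt/kubernetes/cfg/kube-apiserver.conf` first to see what would change, then the fix function, then restart the service.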

root@node1:~/kubernetes_install# systemctl status kube-apiserver.service

● kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/usr/lib/systemd/system/kube-apiserver.service; enabled; preset: enabled)

Active: active (running) since Wed 2025-11-12 15:52:17 CST; 2s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 2877 (kube-apiserver)

Tasks: 8 (limit: 4549)

Memory: 173.2M (peak: 173.7M)

CPU: 1.120s

CGroup: /system.slice/kube-apiserver.service

└─2877 /opt/kubernetes/bin/kube-apiserver --enable-admission-plugins=NamespaceLifecycle,NodeRestrict>

Nov 12 15:52:19 node1 kube-apiserver[2877]: I1112 15:52:19.993576 2877 storage_rbac.go:321] created rolebindin>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.000410 2877 storage_rbac.go:321] created rolebindin>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.004727 2877 storage_rbac.go:321] created rolebindin>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.007932 2877 storage_rbac.go:321] created rolebindin>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.010385 2877 storage_rbac.go:321] created rolebindin>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.014325 2877 storage_rbac.go:321] created rolebindin>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.090338 2877 alloc.go:330] "allocated clusterIPs" se>

Nov 12 15:52:20 node1 kube-apiserver[2877]: W1112 15:52:20.094779 2877 lease.go:265] Resetting endpoints for m>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.095254 2877 controller.go:615] quota admission adde>

Nov 12 15:52:20 node1 kube-apiserver[2877]: I1112 15:52:20.097402 2877 controller.go:615] quota admission adde

3. kube-controller-manager configuration

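kube-controller-manager is the control-plane component that runs controller processes.

Logically, each controller is a separate process, but to reduce complexity they are all compiled into a single binary and run in a single process.

These controllers include:

Node controller: responsible for noticing and responding when nodes go down.

Job controller: watches Job objects that represent one-off tasks, then creates Pods to run those tasks to completion.

EndpointSlice controller: populates EndpointSlice objects (to provide the link between Services and Pods).

ServiceAccount controller: creates the default ServiceAccount for new namespaces.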

kube-controller-manager.conf

root@k8s-master-u2404-4-20-101:~/kubernetes_install# vim /opt/kubernetes/cfg/kube-controller-manager.conf

KUBE_CONTROLLER_MANAGER_OPTS=" \

--v=2 \

--leader-elect=true \

--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \

--bind-address=127.0.0.1 \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--cluster-signing-duration=87600h0m0s"

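#--kubeconfig: kubeconfig file used to connect to the apiserver

#--leader-elect: elect a leader automatically when several instances of this component run (HA)

#--cluster-cidr: CIDR range for Pods in the cluster. Requires --allocate-node-cidrs to be true.

#--service-cluster-ip-range: CIDR range for Services in the cluster. Requires --allocate-node-cidrs to be true.

#--cluster-signing-cert-file/--cluster-signing-key-file: the CA used to issue kubelet certificates automatically; must be the same CA the apiserver uses

kube-controller-manager certificate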

root@k8s-master-u2404-4-20-101:~/TLS/k8s# vim kube-controller-manager-csr.json

{

"CN": "system:kube-controller-manager",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

2025/07/21 14:08:26 [INFO] generate received request

2025/07/21 14:08:26 [INFO] received CSR

2025/07/21 14:08:26 [INFO] generating key: rsa-2048

2025/07/21 14:08:26 [INFO] encoded CSR

2025/07/21 14:08:26 [INFO] signed certificate with serial number 489687226898255732193791210185460969461463525513

2025/07/21 14:08:26 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

root@k8s-master-u2404-4-20-101:~/TLS/k8s# ls kube-controller-manager*

kube-controller-manager.csr kube-controller-manager-key.pem

kube-controller-manager-csr.json kube-controller-manager.pem

kube-controller-manager.kubeconfig file

kube-controller-manager.kubeconfig is generated by the script kube-controller-manager-csr-kubeconfig.

--embed-certs: embeds the certificate and private key directly in the kubeconfig file.

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cat kube-controller-manager-csr-kubeconfig

# Set the variables used below

KUBE_CONFIG="/opt/kubernetes/cfg/kube-controller-manager.kubeconfig"

KUBE_APISERVER="https://172.16.101.101:6443"

# Set the cluster entry

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

# Set the user credentials

kubectl config set-credentials kube-controller-manager \

--client-certificate=./kube-controller-manager.pem \

--client-key=./kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

# Set the context

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-controller-manager \

--kubeconfig=${KUBE_CONFIG}

# Switch to the default context

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

root@k8s-master-u2404-4-20-101:~/TLS/k8s# source kube-controller-manager-csr-kubeconfig

Cluster "kubernetes" set.

User "kube-controller-manager" set.

Context "default" created.

Switched to context "default".
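Because --embed-certs=true was used, the generated file carries the certificates as base64-encoded PEM rather than as file paths. A minimal sketch for decoding one of those fields to check what actually went in; `kubeconfig_decode` is a hypothetical helper that assumes each `*-data` key appears once in the file, as it does here:

```shell
# Print the decoded value of a base64 field from a kubeconfig file.
# $1 = kubeconfig path, $2 = field name, e.g. client-certificate-data
kubeconfig_decode() {
    awk -v key="$2:" '$1 == key { print $2; exit }' "$1" | base64 -d
}
```

For example, `kubeconfig_decode /opt/kubernetes/cfg/kube-controller-manager.kubeconfig client-certificate-data` prints the PEM certificate; piping that into `openssl x509 -noout -subject -dates` shows the subject and validity period.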

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cat /opt/kubernetes/cfg/kube-controller-manager.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURvakNDQW9xZ0F3SUJBZ0lVUWZhNXRzVzJEWmFZSk1rSEZPRTdiclhWc2VRd0RRWUpLb1pJaHZjTkFRRUwKQlFBd2FURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyMTVZekVRTUE0R0ExVUVDeE1IYjNCbGJuTnpiREVXTUJRR0ExVUVBeE1OCmEzVmlaWEp1WlhSbGN5QkRRVEFlRncweU5UQTNNakV3TXpFME1EQmFGdzB6TURBM01qQXdNekUwTURCYU1Ha3gKQ3pBSkJnTlZCQVlUQWtOT01SQXdEZ1lEVlFRSUV3ZENaV2xxYVc1bk1SQXdEZ1lEVlFRSEV3ZENaV2xxYVc1bgpNUXd3Q2dZRFZRUUtFd050ZVdNeEVEQU9CZ05WQkFzVEIyOXdaVzV6YzJ3eEZqQVVCZ05WQkFNVERXdDFZbVZ5CmJtVjBaWE1nUTBFd2dnRWlNQTBHQ1NxR1NJYjNEUUVCQVFVQUE0SUJEd0F3Z2dFS0FvSUJBUUNjWWNteHNQSTcKOWFRNFhqYi9NcjVUUjJ1NlROaTlmSWtxRGRYaG5kK25Ib2FTVWZzQ3hzMUFXc2k0bmJhWGRxZWZsc0J5TTd0TQpDUlpTeWpkNkIyTzlGSWdaNjQ0RXU4NEx4S2I0V0E5bWM1MkZudytsbXNIakN0cmUwS2VaUWxDZ013bGRqSTdkClptN2M1WG9NcmxXSHB6aCsraU1ha2p6YUd6ZkdRdEdlSjg4ZUxqSjQ1WGloMGluYm41MFVjTzNLYUs5b2gvVDIKNVdRN1BqR1dqV1VpWVFuVWJKdWJVV2xrQ0d0OTNHSUJwOG9lc2VoZzJCaDhrM1Q4ZjlVSmx1Z0FPR0x6NmtsNQpsWjVQL1ppazVscnVwdU5NUm9xblh4VU5JWUlrc2ExMU8wZ2xYN3lxUmN5YVNGTUQzZml5V2hNVms0dUUxbzJvClB0SFpTZzlEMkVTZkFnTUJBQUdqUWpCQU1BNEdBMVVkRHdFQi93UUVBd0lCQmpBUEJnTlZIUk1CQWY4RUJUQUQKQVFIL01CMEdBMVVkRGdRV0JCUkIvMGd3SGdpMGY1aHVORFkybFM4aGV2NnB4ekFOQmdrcWhraUc5dzBCQVFzRgpBQU9DQVFFQUt5UHJXVnZKQmFuVmM4ZGFtMktiMVBVOCtRamxGMGwyek9hN25BZTlGWHY1YXQ2MHg3SzB0bG9KCmVKbWtJZ08vbnVhWjI4ak9TWWUrZ1p5YVhtQWdsbkNDTWQ3b29pNlVoMUtGbXJaUmovS0FqUW5VZUh6L01WYkQKbWxaWFRkRzcrZEx2MjIyUHZMRndHN3BQdHNnNmtmUWtUUlgwNUZxa0NuZUtCMS9zRS9Qaks4OWhSMW9iRUxtQwowYTNab3VpbVF6THBKN0ZIRVJobWpmVENWNmMvMktlaEMxWmdidmlJMVp5dGtkQllMcW5JUjZSaUNmZTQ4aDlLCjZ3SC8wbWp5ZG5oKzhYbEtIRWV5SU52WTVJTkJscmRiNmJ2OENlNnBVRUZMdERjZlVDUDI0YzlqelpnR3BrZ24KeE55NGlpY1ZyK2tFNmpPTytGdDN6Q2wySEcwUEZ3PT0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://172.16.101.101:6443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: kube-controller-manager

name: default

current-context: default

kind: Config

preferences: {}

users:

- name: kube-controller-manager

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQrekNDQXVPZ0F3SUJBZ0lVVmNaVnJqakp2WjdIWXArOXRmRHRlV0JhS0lrd0RRWUpLb1pJaHZjTkFRRUwKQlFBd2FURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyMTVZekVRTUE0R0ExVUVDeE1IYjNCbGJuTnpiREVXTUJRR0ExVUVBeE1OCmEzVmlaWEp1WlhSbGN5QkRRVEFlRncweU5UQTNNakV3TmpBek1EQmFGdzB6TlRBM01Ua3dOakF6TURCYU1JR0UKTVFzd0NRWURWUVFHRXdKRFRqRVFNQTRHQTFVRUNCTUhRbVZwU21sdVp6RVFNQTRHQTFVRUJ4TUhRbVZwU21sdQpaekVYTUJVR0ExVUVDaE1PYzNsemRHVnRPbTFoYzNSbGNuTXhEekFOQmdOVkJBc1RCbE41YzNSbGJURW5NQ1VHCkExVUVBeE1lYzNsemRHVnRPbXQxWW1VdFkyOXVkSEp2Ykd4bGNpMXRZVzVoWjJWeU1JSUJJakFOQmdrcWhraUcKOXcwQkFRRUZBQU9DQVE4QU1JSUJDZ0tDQVFFQXZDZnJmR0xienUwTXJPeS9Cdkp5ZkRTWkRzOHE2L3h2S0tLZgpaV252ZTVJdTBUWnlmcWNWRnZ3bFlvd0g3VkZLaS9JQm1CNEdwS2pIOVRaK0dkNWJVVXh0VE5tN1k3bVFxaU1SCml3TGEvRXJlcndCUXVFdkxmMEE1K0FMdU00M1N1Qm50bVNvZm8wbXRBdGFjNzRZOWE5TG81cHBNRjY4SG1NdzMKUE0yLytCcnh6VnZxRHhLRjhjcmlOcldBbGprZDNBY0NPeTh3b1RqWldoUGlQT1RKNThYL0RyUDl6OHBkZUFqTAorOVA3U2Eydzk1ZUVQOTVjTStNUlNSeHZYeDJITWthNCsxZzU0dHVXZkdic3RUcHYxa3J4QThxTytTWDFCbnNGCmc0cmpIcmV0Wmk5d3JtYWtmOXhpejMxK3QrN2Jad0xta0lpQlRaVE02WVJrR1hWL3hRSURBUUFCbzM4d2ZUQU8KQmdOVkhROEJBZjhFQkFNQ0JhQXdIUVlEVlIwbEJCWXdGQVlJS3dZQkJRVUhBd0VHQ0NzR0FRVUZCd01DTUF3RwpBMVVkRXdFQi93UUNNQUF3SFFZRFZSME9CQllFRksrQXNIeVpPaWVvdlZmRDlXTFc5azJLQ3J1TE1COEdBMVVkCkl3UVlNQmFBRkVIL1NEQWVDTFIvbUc0ME5qYVZMeUY2L3FuSE1BMEdDU3FHU0liM0RRRUJDd1VBQTRJQkFRQWgKZjR3bFlJdjNxNVZYdThaR0N3dVJtcE9udkE4NGdUY1Z6Nm5LeDFyeEphWHNKcUpMZEJycCsyZldZNDFXK3piMApVak9JOHBQOVhrZXFWN0lZYllWMWJYSUpyM3lneFludktTYSswR1ZyZnZjQWFnd29PVjRLNTg5aDZrQVVnUmx5ClhMYnBtdkNwZ3pKRXVmUk02ZS9Ca08vUkpFQ0ttSXp5WDRqVitWVFNrZzJCb3BFNVcrbHZzb2VlMWh5ZUF1SHYKcUtDSDhqUFlCeVpCNmwwZkhUc2ZmR2Z5UUFHZFJOTjB2MGlIeTdYWERGMnlVbXcxRDJOY25WSmlmRTJOclFqSApYVjdjTUpyQzlnSVp1dEVFZ3NFQzFXcHVYUEtHOTZBYS81dmdkdGgzRm9QMC9SdHNDN2QwWS9OQnBNbk5BY1RUCmt3ZnVmeGZIdHZkTzh3T09pejJ0Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcFFJQkFBS0NBUUVBdkNmcmZHTGJ6dTBNck95L0J2SnlmRFNaRHM4cTYveHZLS0tmWldudmU1SXUwVFp5CmZxY1ZGdndsWW93SDdWRktpL0lCbUI0R3BLakg5VForR2Q1YlVVeHRUTm03WTdtUXFpTVJpd0xhL0VyZXJ3QlEKdUV2TGYwQTUrQUx1TTQzU3VCbnRtU29mbzBtdEF0YWM3NFk5YTlMbzVwcE1GNjhIbU13M1BNMi8rQnJ4elZ2cQpEeEtGOGNyaU5yV0FsamtkM0FjQ095OHdvVGpaV2hQaVBPVEo1OFgvRHJQOXo4cGRlQWpMKzlQN1NhMnc5NWVFClA5NWNNK01SU1J4dlh4MkhNa2E0KzFnNTR0dVdmR2JzdFRwdjFrcnhBOHFPK1NYMUJuc0ZnNHJqSHJldFppOXcKcm1ha2Y5eGl6MzErdCs3Ylp3TG1rSWlCVFpUTTZZUmtHWFYveFFJREFRQUJBb0lCQVFDU3liSDFRRXFyakZPdgpOS056RUFJdzAvZjBqYnEya0NGSVdsWndEODA5WWpZVUVaNFJJTmhiTGlzY1RwS0Fta0xHR3U4VGRabEpMRU9UCkVnZ2V0bElYZ3NCaWpCcWRHays1NjlIcjJUWnVUUnFjL0duODNXVE15Wlp2M2hsbkx1V05xdXlwNlNyMWdLencKNGUxZEVDVXEwVWZSWDk2dE8yZDUxUmZpMzhFOEZMRnd2Wk93cWNxd2J2YlA5aTg3ZWJ2TXd4UzFrZVNVcWtMUwpLbGFnL1E4cXVhNGJ1WVRzQm5DTktMR053WEYzNWI4U25iOS9MSVZSUVE1YXYxckhRZWZIYy9VbWdRS3RCdmc0CkxwaEVzS2pSS0FWd3B5RHlCWGJKSDVjdnB0a3RzYTBlT1o4NkRWSGNqNm5zc0ZnaXdnVzFxTHh5V3BoZDBRaXAKZnJtMm1IU2hBb0dCQU1pNW1mWnNEZHE0UXdLR1AycnNLem81bDg2RzV0bksyK3lKL2dTVFp5aUxlU2V5RzVabgpwVklydmZTa1Z6cVY2Vk9XUTJ3bEtwamN3UlBKL3NFcVdRZ0pLMit4TEFyVytINXhuNkx2bVdrcUtmcTFFcEE5CkZ1OWlLQnRpWTh0Wk1uV1JUd1k3YUZDaGJHNzB0N2xTNjdkRTlsc20vc0ZTc0xlbE16S2QzTnhiQW9HQkFPLzQKUG15eUlkQWxRUVNXS01lcWtmVmpFaVRNemRZUVFBd0kzQVZDMlJRQXVpYWJLa2t0Tkh1alZCbkRUYytFTHg2Ngo4MDYzYkNqdk1kcS9xSHp4bkJXdmVoT2FHQndSenFPUUF3S0pEeWRFNWo4Z1pBZHJ5elBmdkUwY3krRmtjeGs2Cm9FSWlWbG9SZGlENTdNMUZLQnhkR1kxbVRGNjRvWG9kdExlYTFFNWZBb0dCQUl0SGxsalNRNDdJR3Q5T2pnVEEKV1lKdVlqTVJrbS8vZmprVXkya2JheEpNTFVacEpSRnBXK0szclhocTdJZ2ZhNmJ2ZGxzOU11Q2RGWENJMGpmeApEWlF3NEs0QTcwR2FSeFZkL0ZwUURWQld6SWhGU3R0Qk9IL2t5Vld2SVBZQ0w2dzZwdTM1SFBvTitMTEpKZzczClJjNkdrTGRSU0thV25UN2c1N1N3cTRkUEFvR0FOVUx5Q0FvWmV5dHBuT3ovTENIdHQzcy93YTg3V0hITzVWenEKQ0xqbm1ZcjN4aTNXV3R1UHRJbHgxeTRFRFRVWGlFaVNURHhsNDBnRDFydUhXQVFBVXNmWjNwUHJHZi9SejNmZApVeWk4bGtpeW1meEVkMmt6ZHRZSDQwMnE2dUh5c2Z6VEtScVo4Ky9BT2wxK2M2a1AyQXZKNmhwMGhPbVIzWnJPClM1b3YyUjBDZ1lFQXRaTnV1TW8zOVZSMUp
QMW1jTGtJTkV5Y0E2UWtHNnFDWGgyS1lTWW5JbWdoKzlhS1RZc0IKN2s4eG9EM1prcyszWXdIdDgyWkNxaklLU0tDUGhhS1VrSXMwTi9SUndzNHhwRStqbGwwMGx0SnoxampMbDRMdgpQZjduOHI4WWlKZzRVZmpyMlFrRCtUWWloQ08vK0lGTlFNZkQzMTZ0V1htclBITExBM0Q0WEpFPQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

kube-controller-manager.service

root@k8s-master-u2404-4-20-101:~# vim /usr/lib/systemd/system/kube-controller-manager.service

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

root@k8s-master-u2404-4-20-101:~# systemctl daemon-reload

root@k8s-master-u2404-4-20-101:~# systemctl enable --now kube-controller-manager

Created symlink /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service → /usr/lib/systemd/system/kube-controller-manager.service.

root@k8s-master-u2404-4-20-101:~# systemctl status kube-controller-manager.service

● kube-controller-manager.service - Kubernetes Controller Manager

Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; preset: enabled)

Active: active (running) since Mon 2025-07-21 14:20:33 CST; 9s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: 4034 (kube-controller)

Tasks: 6 (limit: 4550)

Memory: 19.4M (peak: 19.9M)

CPU: 489ms

CGroup: /system.slice/kube-controller-manager.service

└─4034 /opt/kubernetes/bin/kube-controller-manager --v=2 --leader-elect=true --kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig --bind-address=127.0.0.1 --allocate-node-cidrs=true --cluster-cidr=10.244.0.0/16 --service-cluster-ip-range=10.0.0.0/24 --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem --root-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem --cluster-signing-duration=87600h0m0s

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423833 4034 cleaner.go:83] "Starting CSR cleaner controller" logger="certificatesigningrequest-cleaner-controller"

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423873 4034 pv_controller_base.go:313] "Starting persistent volume controller" logger="persistentvolume-binder-controller"

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423876 4034 shared_informer.go:313] Waiting for caches to sync for persistent volume

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423884 4034 publisher.go:102] "Starting root CA cert publisher controller" logger="root-ca-certificate-publisher-controller"

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423886 4034 shared_informer.go:313] Waiting for caches to sync for crt configmap

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423903 4034 endpointslice_controller.go:265] "Starting endpoint slice controller" logger="endpointslice-controller"

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423906 4034 shared_informer.go:313] Waiting for caches to sync for endpoint_slice

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423936 4034 reflector.go:359] Caches populated for *v1.ServiceAccount from k8s.io/client-go/informers/factory.go:160

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.423960 4034 reflector.go:359] Caches populated for *v1.Secret from k8s.io/client-go/informers/factory.go:160

Jul 21 14:20:34 k8s-master-u2404-4-20-101 kube-controller-manager[4034]: I0721 14:20:34.523220 4034 shared_informer.go:320] Caches are synced for tokens

4. kube-scheduler configuration

kube-scheduler: the scheduler. It assigns each Pod to the most suitable node (server) according to its scheduling algorithm.

kube-scheduler.conf

root@k8s-master-u2404-4-20-101:~# vim /opt/kubernetes/cfg/kube-scheduler.conf

KUBE_SCHEDULER_OPTS=" \

--v=2 \

--leader-elect \

--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \

--bind-address=127.0.0.1"

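#--kubeconfig: kubeconfig file used to connect to the apiserver

#--leader-elect: when several instances of this component are running, elect a leader automatically (HA)

kube-scheduler certificate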

root@k8s-master-u2404-4-20-101:~# cd ~/TLS/k8s/

root@k8s-master-u2404-4-20-101:~/TLS/k8s# vim kube-scheduler-csr.json

{

"CN": "system:kube-scheduler",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

2025/07/21 14:24:40 [INFO] generate received request

2025/07/21 14:24:40 [INFO] received CSR

2025/07/21 14:24:40 [INFO] generating key: rsa-2048

2025/07/21 14:24:40 [INFO] encoded CSR

2025/07/21 14:24:40 [INFO] signed certificate with serial number 28432852376660775985891292666302637085398613842

2025/07/21 14:24:40 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

root@k8s-master-u2404-4-20-101:~/TLS/k8s# ls kube-scheduler*

kube-scheduler.csr kube-scheduler-csr.json kube-scheduler-key.pem kube-scheduler.pem

kube-scheduler.kubeconfig file

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cat kube-scheduler-kubeconfig

KUBE_CONFIG="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"

KUBE_APISERVER="https://172.16.101.101:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-credentials kube-scheduler \

--client-certificate=./kube-scheduler.pem \

--client-key=./kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=${KUBE_CONFIG}

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-scheduler \

--kubeconfig=${KUBE_CONFIG}

kubectl config use-context default --kubeconfig=${KUBE_CONFIG}

root@k8s-master-u2404-4-20-101:~/TLS/k8s# source kube-scheduler-kubeconfig

Cluster "kubernetes" set.

User "kube-scheduler" set.

Context "default" modified.

Switched to context "default".
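The four kubectl steps above are the same for every control-plane component; only the component name and the certificate files change. Below is a minimal sketch that wraps them in one function, assuming the same paths as this walkthrough (/opt/kubernetes/cfg and /opt/kubernetes/ssl/ca.pem). The function name make_component_kubeconfig and the KUBECTL override variable are illustrative and not part of the original procedure.

```shell
# Sketch: build <component>.kubeconfig with the same four kubectl steps.
# Set KUBECTL=echo to preview the commands without running kubectl.
make_component_kubeconfig() {
  local component="$1"      # e.g. kube-scheduler
  local apiserver="$2"      # e.g. https://172.16.101.101:6443
  local cert_dir="${3:-.}"  # directory holding <component>.pem and <component>-key.pem
  local kubeconfig="/opt/kubernetes/cfg/${component}.kubeconfig"
  local kubectl="${KUBECTL:-kubectl}"

  # 1. Cluster entry: apiserver address plus the embedded CA certificate.
  "$kubectl" config set-cluster kubernetes \
    --certificate-authority=/opt/kubernetes/ssl/ca.pem \
    --embed-certs=true \
    --server="$apiserver" \
    --kubeconfig="$kubeconfig" || return 1

  # 2. User entry: the component's client certificate and key, embedded.
  "$kubectl" config set-credentials "$component" \
    --client-certificate="${cert_dir}/${component}.pem" \
    --client-key="${cert_dir}/${component}-key.pem" \
    --embed-certs=true \
    --kubeconfig="$kubeconfig" || return 1

  # 3. Context tying the cluster and the user together.
  "$kubectl" config set-context default \
    --cluster=kubernetes \
    --user="$component" \
    --kubeconfig="$kubeconfig" || return 1

  # 4. Select that context; skipping this step leaves current-context empty.
  "$kubectl" config use-context default --kubeconfig="$kubeconfig"
}

# Example (run from ~/TLS/k8s, requires kubectl):
#   make_component_kubeconfig kube-scheduler https://172.16.101.101:6443 .
```

Keeping all four steps in one function makes it harder to drop the final use-context line by accident.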

root@k8s-master-u2404-4-20-101:~/TLS/k8s# cat /opt/kubernetes/cfg/kube-scheduler.kubeconfig

apiVersion: v1

clusters:

- cluster:

certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSURvakNDQW9xZ0F3SUJBZ0lVUWZhNXRzVzJEWmFZSk1rSEZPRTdiclhWc2VRd0RRWUpLb1pJaHZjTkFRRUwKQlFBd2FURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyMTVZekVRTUE0R0ExVUVDeE1IYjNCbGJuTnpiREVXTUJRR0ExVUVBeE1OCmEzVmlaWEp1WlhSbGN5QkRRVEFlRncweU5UQTNNakV3TXpFME1EQmFGdzB6TURBM01qQXdNekUwTURCYU1Ha3gKQ3pBSkJnTlZCQVlUQWtOT01SQXdEZ1lEVlFRSUV3ZENaV2xxYVc1bk1SQXdEZ1lEVlFRSEV3ZENaV2xxYVc1bgpNUXd3Q2dZRFZRUUtFd050ZVdNeEVEQU9CZ05WQkFzVEIyOXdaVzV6YzJ3eEZqQVVCZ05WQkFNVERXdDFZbVZ5CmJtVjBaWE1nUTBFd2dnRWlNQTBHQ1NxR1NJYjNEUUVCQVFVQUE0SUJEd0F3Z2dFS0FvSUJBUUNjWWNteHNQSTcKOWFRNFhqYi9NcjVUUjJ1NlROaTlmSWtxRGRYaG5kK25Ib2FTVWZzQ3hzMUFXc2k0bmJhWGRxZWZsc0J5TTd0TQpDUlpTeWpkNkIyTzlGSWdaNjQ0RXU4NEx4S2I0V0E5bWM1MkZudytsbXNIakN0cmUwS2VaUWxDZ013bGRqSTdkClptN2M1WG9NcmxXSHB6aCsraU1ha2p6YUd6ZkdRdEdlSjg4ZUxqSjQ1WGloMGluYm41MFVjTzNLYUs5b2gvVDIKNVdRN1BqR1dqV1VpWVFuVWJKdWJVV2xrQ0d0OTNHSUJwOG9lc2VoZzJCaDhrM1Q4ZjlVSmx1Z0FPR0x6NmtsNQpsWjVQL1ppazVscnVwdU5NUm9xblh4VU5JWUlrc2ExMU8wZ2xYN3lxUmN5YVNGTUQzZml5V2hNVms0dUUxbzJvClB0SFpTZzlEMkVTZkFnTUJBQUdqUWpCQU1BNEdBMVVkRHdFQi93UUVBd0lCQmpBUEJnTlZIUk1CQWY4RUJUQUQKQVFIL01CMEdBMVVkRGdRV0JCUkIvMGd3SGdpMGY1aHVORFkybFM4aGV2NnB4ekFOQmdrcWhraUc5dzBCQVFzRgpBQU9DQVFFQUt5UHJXVnZKQmFuVmM4ZGFtMktiMVBVOCtRamxGMGwyek9hN25BZTlGWHY1YXQ2MHg3SzB0bG9KCmVKbWtJZ08vbnVhWjI4ak9TWWUrZ1p5YVhtQWdsbkNDTWQ3b29pNlVoMUtGbXJaUmovS0FqUW5VZUh6L01WYkQKbWxaWFRkRzcrZEx2MjIyUHZMRndHN3BQdHNnNmtmUWtUUlgwNUZxa0NuZUtCMS9zRS9Qaks4OWhSMW9iRUxtQwowYTNab3VpbVF6THBKN0ZIRVJobWpmVENWNmMvMktlaEMxWmdidmlJMVp5dGtkQllMcW5JUjZSaUNmZTQ4aDlLCjZ3SC8wbWp5ZG5oKzhYbEtIRWV5SU52WTVJTkJscmRiNmJ2OENlNnBVRUZMdERjZlVDUDI0YzlqelpnR3BrZ24KeE55NGlpY1ZyK2tFNmpPTytGdDN6Q2wySEcwUEZ3PT0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

server: https://172.16.101.101:6443

name: kubernetes

contexts:

- context:

cluster: kubernetes

user: kube-scheduler

name: default

current-context: default

kind: Config

preferences: {}

users:

- name: kube-scheduler

user:

client-certificate-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUQ4VENDQXRtZ0F3SUJBZ0lVQlByNUkxM0hSd3FMUnkycFZTUVV2bzZ6UDFJd0RRWUpLb1pJaHZjTkFRRUwKQlFBd2FURUxNQWtHQTFVRUJoTUNRMDR4RURBT0JnTlZCQWdUQjBKbGFXcHBibWN4RURBT0JnTlZCQWNUQjBKbAphV3BwYm1jeEREQUtCZ05WQkFvVEEyMTVZekVRTUE0R0ExVUVDeE1IYjNCbGJuTnpiREVXTUJRR0ExVUVBeE1OCmEzVmlaWEp1WlhSbGN5QkRRVEFlRncweU5UQTNNakV3TmpJd01EQmFGdzB6TlRBM01Ua3dOakl3TURCYU1Ic3gKQ3pBSkJnTlZCQVlUQWtOT01SQXdEZ1lEVlFRSUV3ZENaV2xLYVc1bk1SQXdEZ1lEVlFRSEV3ZENaV2xLYVc1bgpNUmN3RlFZRFZRUUtFdzV6ZVhOMFpXMDZiV0Z6ZEdWeWN6RVBNQTBHQTFVRUN4TUdVM2x6ZEdWdE1SNHdIQVlEClZRUURFeFZ6ZVhOMFpXMDZhM1ZpWlMxelkyaGxaSFZzWlhJd2dnRWlNQTBHQ1NxR1NJYjNEUUVCQVFVQUE0SUIKRHdBd2dnRUtBb0lCQVFEck9WczRhK0hIbmtRKzhiSzRHK1loODdleTMyaXZzRzE4b3RqVEVWaXhlSUVqMXV3OAoweGh4MWNsdnF4S0NQbGxiY1VLdVVxTTBQbk1DSlltYi8rTlpzajRUNSt5bk5oNUZuZm5WQmpSTUdFQXY5M3EwClcrTDJ2dWFURWRkUzVRYzVtcnY2TmpyMjV1c3JzVGRGd3cxYm5oZ1Nna3pRMzA1V2w3SFVVVkllakhiWW5JMEEKNVlSRWc4bXZibVBLSmd1TWdnWUVYMFIwV2IraWJ0OVZHbHRkclZrQ3ZlMmZNS0QvRlh0M0orVHhkNzRqM3FqSQovdVpzWE9NdE1EZ21KSzVwbUdVTGV1ZDV4MVdCdE11eHBUYU5lcWx0cTVVSW9KdlNSbXRoYmJ6OFlzdGFFd3ZOClpkb29OQWlGYittSDR3MHRLeTQrbW1sR3pGUEpKYStqNFQxdEFnTUJBQUdqZnpCOU1BNEdBMVVkRHdFQi93UUUKQXdJRm9EQWRCZ05WSFNVRUZqQVVCZ2dyQmdFRkJRY0RBUVlJS3dZQkJRVUhBd0l3REFZRFZSMFRBUUgvQkFJdwpBREFkQmdOVkhRNEVGZ1FVT2pHcFVZbmJsVmwwTS9VSlB3MDdrUGxrZjJRd0h3WURWUjBqQkJnd0ZvQVVRZjlJCk1CNEl0SCtZYmpRMk5wVXZJWHIrcWNjd0RRWUpLb1pJaHZjTkFRRUxCUUFEZ2dFQkFKVkFSZ093NExzMWtHeW4KSkRUMkN1b2llZmlVeVpRdmNLVm0yMGZCbE1OYVVnVGRGU20wL1djeWVIaW5taXdnSDA5U212WjlpZWhPRjk0Mwp2TC9UclZoUklFL2toM0Jjbmc2bWx4TUhwZVRDYnNLa2pGZVR5SHhCdGV6czBhc2s0djJLY1c5VVcrUmVHbHQ5CjA4aHIySGM3bGVFZEJJSWUyOWZRbFgwa2FxY1lZaXFSbHF4UWtQeGRNM0hqT0JReklicTJ4d1hHSW1sOWdqNmIKTGNDUkhsTDNndTgxUEFZK3FCbjd3TzFUNXRXRTYzaTRmMThwTU05VjR2Yjl0R0V1TlVvMXgvNjh6eFFMRWpUQgpvU2hkUU1Wc1hWQUo4cXNKQTlzeitHWk50ZGRMMTJMd1EySWU1d1VPYjNsN0tUVTgyRG5BRjJXOUM5QlFWUHluCk1PNDNnU0U9Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K

client-key-data: LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFcFFJQkFBS0NBUUVBNnpsYk9Hdmh4NTVFUHZHeXVCdm1JZk8zc3Q5b3I3QnRmS0xZMHhGWXNYaUJJOWJzClBOTVljZFhKYjZzU2dqNVpXM0ZDcmxLak5ENXpBaVdKbS8valdiSStFK2ZzcHpZZVJaMzUxUVkwVEJoQUwvZDYKdEZ2aTlyN21reEhYVXVVSE9acTcralk2OXVicks3RTNSY01OVzU0WUVvSk0wTjlPVnBleDFGRlNIb3gyMkp5TgpBT1dFUklQSnIyNWp5aVlMaklJR0JGOUVkRm0vb203ZlZScGJYYTFaQXIzdG56Q2cveFY3ZHlmazhYZStJOTZvCnlQN21iRnpqTFRBNEppU3VhWmhsQzNybmVjZFZnYlRMc2FVMmpYcXBiYXVWQ0tDYjBrWnJZVzI4L0dMTFdoTUwKeldYYUtEUUloVy9waCtNTkxTc3VQcHBwUnN4VHlTV3ZvK0U5YlFJREFRQUJBb0lCQUEzUmZzUmZ3aEhDQUd4YQpNbytTUkFDMm1wSU5nYzdnWkc0dit1RGJZZ1I2K2Nzck14R1hyUlh5NHpTR0xqNHNmMzladGZzYnE2N0VCR21aCjN1MmxLS3Y2UnA5UXZweE1GNWNyWXFQYkMzTjA4VUJnSDNzODhxWmdMSmR6TXQwUnkwemRCREg4d1pZRGxza28KVGdEeEpuVzlZZGlrZ3ZLNlM1WFdyNEd6alVseUJpcVZNTFFBVC9PU0RVWHlJSUR5QlQ0VjRsbHRRbnBaSE05OQpqWGVXdXl6TVBpazRDa2I1ak93UkNNVk05K0tKd0hkOERyLzZxWjRLUGxTcTgxZm8vcENXbjRLRDVYSDk5dXVQCnpMcVhPSmdFcVhwQUxrWlc5aWxGZDl6M0VNdjhKVE5HVm5sV2VjQ3F5TTZMeGNlYmxlMWxjME56Y3A1clNubWcKNEFVWDhFRUNnWUVBOEF4M25uZjNrSlA5OWpVd1huV1J2TUZWaWhFOWswdTFNQ2dCK3A0TzU2K2xNUE1YRXB5cgpjeGVqZXVsTWgvVlVFWGZGNVRYSkdNM0x3QkJhK0hjcTlKamhPQVRsL1Vlc3FINVRWb01mZ0lvVVl2WDhLeWhiCjZhZ1lvSWtXSTQ3TCtLcUFEbXB4d3dkZURiRzR2QzdaZUFESFRtTURGQlNkbG5XQ1cyUFdoQ2tDZ1lFQSt0clEKekdrMkNMV1RkN2QweUJYR1RHU1EzRWMwbFIva2xVb2dmc1ZrNXI2eHByTlliVHBpNENIVVcxeXJScTBKUnJqVQpJSWZQOWtSSEsvWlFodWhGT3hzK0JLZXJJRlF3S0xqK091aE8rWHoveHBzM0tJdDVNVWpzYTVqNmhqVkY0MWtICnhPTjlJS0NaeFlzeTkyVnlpbFlPYy9JRXhzYWFJalBsdG9lWGQ2VUNnWUVBN3JuM1hFbkNrcTRiS3ZmS21xWWgKd2E0ais2TVpzWnJoSG5zclBLcGorRlhkMnNobWNjUU5YZkJzVEpnbjNDNUc1UGhRZnByMjJ3d1BUWHIyZlpORgp6T3NkVURETzZReVcwUnFRbHNEZ1cxejIyVlA0N0pLK2xhanVsUGpBWTZ4bmZXMVMzUU5QRDc0TDgyS0RiZUxKCnMyWlN6OG40RGNoUzBJY2NsUGE5SjhFQ2dZRUF0MzNMY1ZvY1BpNmpXZFNGeGIyM3VUVnVpTkpFOGpmTCtpK28KcVZJMlJscUNsQTlueFM0S0dTeGxxeGFUNmpTMExsa1FRV05XaVNyVWJLSFZzWGpBKzBVb0RqdWUveHpWeFZQYwpFcmJPM2N2RFJFRlJEWVZIOXZjQ2lJbno1cXVkSFhtSUowckh3ay8zYXZveEk2bS9LTlZkNlEzRTFLbDlJVHVZCjhmVW9wRWtDZ1lFQTdyci83RjhtQ3NINHJ
qbWp4Ukt4TnliZHJPSGNNcTBrNVQ2UkpEU2JLa1lPd1ZKVHZjR2oKQ0xsa0RkNVBsVVphQThJV3hJUFR3VEhETThma2tCRjZyZlBVQVNCcE1qZFZsNlpoaGJmb2x1bWV0OWFlVk9yRwo4QXFHMURISVBsRFVtZ2xjYnZCa0VoS0hyRzA2TEcxeUs1eS93NnF6a1hERkplOVVHVkJRU1VJPQotLS0tLUVORCBSU0EgUFJJVkFURSBLRVktLS0tLQo=

kube-scheduler.service

root@k8s-master-u2404-4-20-101:~/TLS/k8s# vim /usr/lib/systemd/system/kube-scheduler.service

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

root@k8s-master-u2404-4-20-101:~/TLS/k8s# systemctl daemon-reload

root@k8s-master-u2404-4-20-101:~/TLS/k8s# systemctl enable --now kube-scheduler

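Created symlink /etc/systemd/system/multi-user.target.wants/kube-scheduler.service → /usr/lib/systemd/system/kube-scheduler.service.

Error log

The last line of kube-scheduler-kubeconfig (the kubectl config use-context command) was initially left out, and the service failed to start as shown below.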
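A quick way to catch this before starting the service is to check that the generated kubeconfig actually has a current-context. The following is a minimal sketch that relies only on awk and tr; the function name kubeconfig_has_current_context is made up for illustration, and the check assumes the one-key-per-line YAML layout that kubectl writes.

```shell
# Sketch: succeed (exit 0) only if the kubeconfig names a current-context.
kubeconfig_has_current_context() {
  local file="$1"
  local ctx
  # Take the value after "current-context:" and strip any quotes;
  # an unset context is typically serialized as current-context: ""
  ctx="$(awk '$1 == "current-context:" { print $2; exit }' "$file" | tr -d '"')"
  [ -n "$ctx" ]
}

# Example:
#   kubeconfig_has_current_context /opt/kubernetes/cfg/kube-scheduler.kubeconfig \
#     || echo "current-context is not set; re-run kubectl config use-context"
```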

root@k8s-master-u2404-4-20-101:~/TLS/k8s# systemctl status kube-scheduler

× kube-scheduler.service - Kubernetes Scheduler

Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; preset: enabled)

Active: failed (Result: exit-code) since Mon 2025-07-21 14:30:19 CST; 31s ago

Duration: 145ms

Docs: https://github.com/kubernetes/kubernetes

Process: 4281 ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS (code=exited, status=1/FAILURE)

Main PID: 4281 (code=exited, status=1/FAILURE)

CPU: 141ms

Jul 21 14:30:19 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Scheduled restart job, restart counter is at 5.

Jul 21 14:30:19 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Start request repeated too quickly.

Jul 21 14:30:19 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Failed with result 'exit-code'.

Jul 21 14:30:19 k8s-master-u2404-4-20-101 systemd[1]: Failed to start kube-scheduler.service - Kubernetes Scheduler.
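The status output only shows that systemd gave up after repeated restarts; the real cause is in the journal. Because the scheduler prints every flag at --v=2, the journal is long, so it can help to filter for klog error lines, whose message starts with E (error) or F (fatal) followed by the month and day. A minimal sketch, with klog_errors as an illustrative helper name:

```shell
# Sketch: keep only klog error (E) and fatal (F) lines from journal text on stdin.
# Matches e.g. "...kube-scheduler[4246]: E0721 14:30:17.113465 ...".
klog_errors() {
  grep -E '\]: [EF][0-9]{4} [0-9]{2}:[0-9]{2}:[0-9]{2}\.'
}

# Example (requires systemd):
#   journalctl -u kube-scheduler --no-pager | klog_errors
```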

root@k8s-master-u2404-4-20-101:~/TLS/k8s# journalctl -u kube-scheduler

Jul 21 14:30:16 k8s-master-u2404-4-20-101 systemd[1]: Started kube-scheduler.service - Kubernetes Scheduler.

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788026 4246 flags.go:64] FLAG: --allow-metric-labels="[]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788073 4246 flags.go:64] FLAG: --allow-metric-labels-manifest=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788076 4246 flags.go:64] FLAG: --authentication-kubeconfig=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788078 4246 flags.go:64] FLAG: --authentication-skip-lookup="false"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788080 4246 flags.go:64] FLAG: --authentication-token-webhook-cache-ttl="10s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788082 4246 flags.go:64] FLAG: --authentication-tolerate-lookup-failure="true"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788083 4246 flags.go:64] FLAG: --authorization-always-allow-paths="[/healthz,/readyz,/livez]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788089 4246 flags.go:64] FLAG: --authorization-kubeconfig=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788090 4246 flags.go:64] FLAG: --authorization-webhook-cache-authorized-ttl="10s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788092 4246 flags.go:64] FLAG: --authorization-webhook-cache-unauthorized-ttl="10s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788093 4246 flags.go:64] FLAG: --bind-address="127.0.0.1"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788097 4246 flags.go:64] FLAG: --cert-dir=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788098 4246 flags.go:64] FLAG: --client-ca-file=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788099 4246 flags.go:64] FLAG: --config=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788100 4246 flags.go:64] FLAG: --contention-profiling="true"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788101 4246 flags.go:64] FLAG: --disabled-metrics="[]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788103 4246 flags.go:64] FLAG: --feature-gates=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788105 4246 flags.go:64] FLAG: --help="false"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788106 4246 flags.go:64] FLAG: --http2-max-streams-per-connection="0"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788107 4246 flags.go:64] FLAG: --kube-api-burst="100"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788109 4246 flags.go:64] FLAG: --kube-api-content-type="application/vnd.kubernetes.protobuf"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788110 4246 flags.go:64] FLAG: --kube-api-qps="50"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788112 4246 flags.go:64] FLAG: --kubeconfig="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788113 4246 flags.go:64] FLAG: --leader-elect="true"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788114 4246 flags.go:64] FLAG: --leader-elect-lease-duration="15s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788115 4246 flags.go:64] FLAG: --leader-elect-renew-deadline="10s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788115 4246 flags.go:64] FLAG: --leader-elect-resource-lock="leases"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788117 4246 flags.go:64] FLAG: --leader-elect-resource-name="kube-scheduler"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788118 4246 flags.go:64] FLAG: --leader-elect-resource-namespace="kube-system"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788119 4246 flags.go:64] FLAG: --leader-elect-retry-period="2s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788123 4246 flags.go:64] FLAG: --log-flush-frequency="5s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788124 4246 flags.go:64] FLAG: --log-json-info-buffer-size="0"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788129 4246 flags.go:64] FLAG: --log-json-split-stream="false"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788131 4246 flags.go:64] FLAG: --log-text-info-buffer-size="0"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788132 4246 flags.go:64] FLAG: --log-text-split-stream="false"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788133 4246 flags.go:64] FLAG: --logging-format="text"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788134 4246 flags.go:64] FLAG: --master=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788135 4246 flags.go:64] FLAG: --permit-address-sharing="false"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788136 4246 flags.go:64] FLAG: --permit-port-sharing="false"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788139 4246 flags.go:64] FLAG: --pod-max-in-unschedulable-pods-duration="5m0s"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788141 4246 flags.go:64] FLAG: --profiling="true"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788142 4246 flags.go:64] FLAG: --requestheader-allowed-names="[]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788144 4246 flags.go:64] FLAG: --requestheader-client-ca-file=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788145 4246 flags.go:64] FLAG: --requestheader-extra-headers-prefix="[x-remote-extra-]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788147 4246 flags.go:64] FLAG: --requestheader-group-headers="[x-remote-group]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788148 4246 flags.go:64] FLAG: --requestheader-username-headers="[x-remote-user]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788150 4246 flags.go:64] FLAG: --secure-port="10259"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788151 4246 flags.go:64] FLAG: --show-hidden-metrics-for-version=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788152 4246 flags.go:64] FLAG: --tls-cert-file=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788153 4246 flags.go:64] FLAG: --tls-cipher-suites="[]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788154 4246 flags.go:64] FLAG: --tls-min-version=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788155 4246 flags.go:64] FLAG: --tls-private-key-file=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788156 4246 flags.go:64] FLAG: --tls-sni-cert-key="[]"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788159 4246 flags.go:64] FLAG: --v="2"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788161 4246 flags.go:64] FLAG: --version="false"

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788163 4246 flags.go:64] FLAG: --vmodule=""

Jul 21 14:30:16 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:16.788165 4246 flags.go:64] FLAG: --write-config-to=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4246]: I0721 14:30:17.113042 4246 serving.go:380] Generated self-signed cert in-memory

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4246]: E0721 14:30:17.113465 4246 run.go:74] "command failed" err="invalid configuration: no configuration has been provided, try setting KUBERNETES_MASTER environment variable"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Main process exited, code=exited, status=1/FAILURE

Jul 21 14:30:17 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Failed with result 'exit-code'.

Jul 21 14:30:17 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Scheduled restart job, restart counter is at 1.

Jul 21 14:30:17 k8s-master-u2404-4-20-101 systemd[1]: Started kube-scheduler.service - Kubernetes Scheduler.

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415008 4255 flags.go:64] FLAG: --allow-metric-labels="[]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415065 4255 flags.go:64] FLAG: --allow-metric-labels-manifest=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415068 4255 flags.go:64] FLAG: --authentication-kubeconfig=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415070 4255 flags.go:64] FLAG: --authentication-skip-lookup="false"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415072 4255 flags.go:64] FLAG: --authentication-token-webhook-cache-ttl="10s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415075 4255 flags.go:64] FLAG: --authentication-tolerate-lookup-failure="true"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415076 4255 flags.go:64] FLAG: --authorization-always-allow-paths="[/healthz,/readyz,/livez]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415081 4255 flags.go:64] FLAG: --authorization-kubeconfig=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415082 4255 flags.go:64] FLAG: --authorization-webhook-cache-authorized-ttl="10s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415084 4255 flags.go:64] FLAG: --authorization-webhook-cache-unauthorized-ttl="10s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415085 4255 flags.go:64] FLAG: --bind-address="127.0.0.1"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415088 4255 flags.go:64] FLAG: --cert-dir=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415089 4255 flags.go:64] FLAG: --client-ca-file=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415093 4255 flags.go:64] FLAG: --config=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415095 4255 flags.go:64] FLAG: --contention-profiling="true"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415097 4255 flags.go:64] FLAG: --disabled-metrics="[]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415100 4255 flags.go:64] FLAG: --feature-gates=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415105 4255 flags.go:64] FLAG: --help="false"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415106 4255 flags.go:64] FLAG: --http2-max-streams-per-connection="0"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415108 4255 flags.go:64] FLAG: --kube-api-burst="100"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415111 4255 flags.go:64] FLAG: --kube-api-content-type="application/vnd.kubernetes.protobuf"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415112 4255 flags.go:64] FLAG: --kube-api-qps="50"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415114 4255 flags.go:64] FLAG: --kubeconfig="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415116 4255 flags.go:64] FLAG: --leader-elect="true"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415117 4255 flags.go:64] FLAG: --leader-elect-lease-duration="15s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415119 4255 flags.go:64] FLAG: --leader-elect-renew-deadline="10s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415120 4255 flags.go:64] FLAG: --leader-elect-resource-lock="leases"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415121 4255 flags.go:64] FLAG: --leader-elect-resource-name="kube-scheduler"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415124 4255 flags.go:64] FLAG: --leader-elect-resource-namespace="kube-system"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415125 4255 flags.go:64] FLAG: --leader-elect-retry-period="2s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415127 4255 flags.go:64] FLAG: --log-flush-frequency="5s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415129 4255 flags.go:64] FLAG: --log-json-info-buffer-size="0"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415132 4255 flags.go:64] FLAG: --log-json-split-stream="false"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415134 4255 flags.go:64] FLAG: --log-text-info-buffer-size="0"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415135 4255 flags.go:64] FLAG: --log-text-split-stream="false"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415137 4255 flags.go:64] FLAG: --logging-format="text"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415138 4255 flags.go:64] FLAG: --master=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415139 4255 flags.go:64] FLAG: --permit-address-sharing="false"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415140 4255 flags.go:64] FLAG: --permit-port-sharing="false"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415142 4255 flags.go:64] FLAG: --pod-max-in-unschedulable-pods-duration="5m0s"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415143 4255 flags.go:64] FLAG: --profiling="true"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415144 4255 flags.go:64] FLAG: --requestheader-allowed-names="[]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415147 4255 flags.go:64] FLAG: --requestheader-client-ca-file=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415148 4255 flags.go:64] FLAG: --requestheader-extra-headers-prefix="[x-remote-extra-]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415151 4255 flags.go:64] FLAG: --requestheader-group-headers="[x-remote-group]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415154 4255 flags.go:64] FLAG: --requestheader-username-headers="[x-remote-user]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415156 4255 flags.go:64] FLAG: --secure-port="10259"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415159 4255 flags.go:64] FLAG: --show-hidden-metrics-for-version=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415160 4255 flags.go:64] FLAG: --tls-cert-file=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415163 4255 flags.go:64] FLAG: --tls-cipher-suites="[]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415165 4255 flags.go:64] FLAG: --tls-min-version=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415166 4255 flags.go:64] FLAG: --tls-private-key-file=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415167 4255 flags.go:64] FLAG: --tls-sni-cert-key="[]"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415170 4255 flags.go:64] FLAG: --v="2"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415172 4255 flags.go:64] FLAG: --version="false"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415174 4255 flags.go:64] FLAG: --vmodule=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.415177 4255 flags.go:64] FLAG: --write-config-to=""

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: I0721 14:30:17.800461 4255 serving.go:380] Generated self-signed cert in-memory

Jul 21 14:30:17 k8s-master-u2404-4-20-101 kube-scheduler[4255]: E0721 14:30:17.800855 4255 run.go:74] "command failed" err="invalid configuration: no configuration has been provided, try setting KUBERNETES_MASTER environment variable"

Jul 21 14:30:17 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Main process exited, code=exited, status=1/FAILURE

Jul 21 14:30:17 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Failed with result 'exit-code'.

Jul 21 14:30:18 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Scheduled restart job, restart counter is at 2.

Jul 21 14:30:18 k8s-master-u2404-4-20-101 systemd[1]: Started kube-scheduler.service - Kubernetes Scheduler.

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159211 4264 flags.go:64] FLAG: --allow-metric-labels="[]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159270 4264 flags.go:64] FLAG: --allow-metric-labels-manifest=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159275 4264 flags.go:64] FLAG: --authentication-kubeconfig=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159277 4264 flags.go:64] FLAG: --authentication-skip-lookup="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159280 4264 flags.go:64] FLAG: --authentication-token-webhook-cache-ttl="10s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159283 4264 flags.go:64] FLAG: --authentication-tolerate-lookup-failure="true"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159285 4264 flags.go:64] FLAG: --authorization-always-allow-paths="[/healthz,/readyz,/livez]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159291 4264 flags.go:64] FLAG: --authorization-kubeconfig=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159293 4264 flags.go:64] FLAG: --authorization-webhook-cache-authorized-ttl="10s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159296 4264 flags.go:64] FLAG: --authorization-webhook-cache-unauthorized-ttl="10s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159298 4264 flags.go:64] FLAG: --bind-address="127.0.0.1"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159301 4264 flags.go:64] FLAG: --cert-dir=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159304 4264 flags.go:64] FLAG: --client-ca-file=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159307 4264 flags.go:64] FLAG: --config=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159309 4264 flags.go:64] FLAG: --contention-profiling="true"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159312 4264 flags.go:64] FLAG: --disabled-metrics="[]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159315 4264 flags.go:64] FLAG: --feature-gates=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159326 4264 flags.go:64] FLAG: --help="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159328 4264 flags.go:64] FLAG: --http2-max-streams-per-connection="0"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159334 4264 flags.go:64] FLAG: --kube-api-burst="100"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159336 4264 flags.go:64] FLAG: --kube-api-content-type="application/vnd.kubernetes.protobuf"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159339 4264 flags.go:64] FLAG: --kube-api-qps="50"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159344 4264 flags.go:64] FLAG: --kubeconfig="/opt/kubernetes/cfg/kube-scheduler.kubeconfig"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159355 4264 flags.go:64] FLAG: --leader-elect="true"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159357 4264 flags.go:64] FLAG: --leader-elect-lease-duration="15s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159359 4264 flags.go:64] FLAG: --leader-elect-renew-deadline="10s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159360 4264 flags.go:64] FLAG: --leader-elect-resource-lock="leases"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159362 4264 flags.go:64] FLAG: --leader-elect-resource-name="kube-scheduler"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159364 4264 flags.go:64] FLAG: --leader-elect-resource-namespace="kube-system"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159366 4264 flags.go:64] FLAG: --leader-elect-retry-period="2s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159367 4264 flags.go:64] FLAG: --log-flush-frequency="5s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159369 4264 flags.go:64] FLAG: --log-json-info-buffer-size="0"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159375 4264 flags.go:64] FLAG: --log-json-split-stream="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159376 4264 flags.go:64] FLAG: --log-text-info-buffer-size="0"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159378 4264 flags.go:64] FLAG: --log-text-split-stream="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159380 4264 flags.go:64] FLAG: --logging-format="text"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159381 4264 flags.go:64] FLAG: --master=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159383 4264 flags.go:64] FLAG: --permit-address-sharing="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159385 4264 flags.go:64] FLAG: --permit-port-sharing="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159387 4264 flags.go:64] FLAG: --pod-max-in-unschedulable-pods-duration="5m0s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159388 4264 flags.go:64] FLAG: --profiling="true"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159390 4264 flags.go:64] FLAG: --requestheader-allowed-names="[]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159393 4264 flags.go:64] FLAG: --requestheader-client-ca-file=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159396 4264 flags.go:64] FLAG: --requestheader-extra-headers-prefix="[x-remote-extra-]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159399 4264 flags.go:64] FLAG: --requestheader-group-headers="[x-remote-group]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159410 4264 flags.go:64] FLAG: --requestheader-username-headers="[x-remote-user]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159415 4264 flags.go:64] FLAG: --secure-port="10259"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159417 4264 flags.go:64] FLAG: --show-hidden-metrics-for-version=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159419 4264 flags.go:64] FLAG: --tls-cert-file=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159420 4264 flags.go:64] FLAG: --tls-cipher-suites="[]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159423 4264 flags.go:64] FLAG: --tls-min-version=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159424 4264 flags.go:64] FLAG: --tls-private-key-file=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159426 4264 flags.go:64] FLAG: --tls-sni-cert-key="[]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159431 4264 flags.go:64] FLAG: --v="2"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159434 4264 flags.go:64] FLAG: --version="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159436 4264 flags.go:64] FLAG: --vmodule=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.159438 4264 flags.go:64] FLAG: --write-config-to=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: I0721 14:30:18.372614 4264 serving.go:380] Generated self-signed cert in-memory

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4264]: E0721 14:30:18.372992 4264 run.go:74] "command failed" err="invalid configuration: no configuration has been provided, try setting KUBERNETES_MASTER environment variable"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Main process exited, code=exited, status=1/FAILURE

Jul 21 14:30:18 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Failed with result 'exit-code'.

Jul 21 14:30:18 k8s-master-u2404-4-20-101 systemd[1]: kube-scheduler.service: Scheduled restart job, restart counter is at 3.

Jul 21 14:30:18 k8s-master-u2404-4-20-101 systemd[1]: Started kube-scheduler.service - Kubernetes Scheduler.

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657347 4273 flags.go:64] FLAG: --allow-metric-labels="[]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657431 4273 flags.go:64] FLAG: --allow-metric-labels-manifest=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657434 4273 flags.go:64] FLAG: --authentication-kubeconfig=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657437 4273 flags.go:64] FLAG: --authentication-skip-lookup="false"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657439 4273 flags.go:64] FLAG: --authentication-token-webhook-cache-ttl="10s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657443 4273 flags.go:64] FLAG: --authentication-tolerate-lookup-failure="true"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657444 4273 flags.go:64] FLAG: --authorization-always-allow-paths="[/healthz,/readyz,/livez]"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657448 4273 flags.go:64] FLAG: --authorization-kubeconfig=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657449 4273 flags.go:64] FLAG: --authorization-webhook-cache-authorized-ttl="10s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657450 4273 flags.go:64] FLAG: --authorization-webhook-cache-unauthorized-ttl="10s"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657452 4273 flags.go:64] FLAG: --bind-address="127.0.0.1"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657456 4273 flags.go:64] FLAG: --cert-dir=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657459 4273 flags.go:64] FLAG: --client-ca-file=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657461 4273 flags.go:64] FLAG: --config=""

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657462 4273 flags.go:64] FLAG: --contention-profiling="true"

Jul 21 14:30:18 k8s-master-u2404-4-20-101 kube-scheduler[4273]: I0721 14:30:18.657464 4273 flags.go:64] FLAG: --disabled-metrics="[]"